Manifold AI Learning

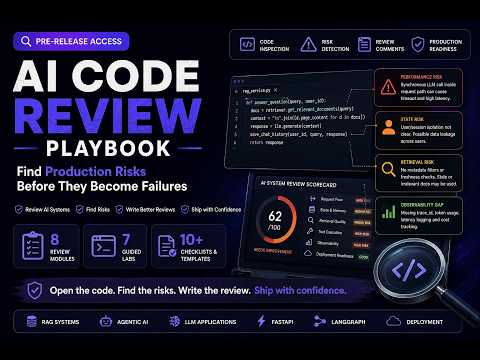

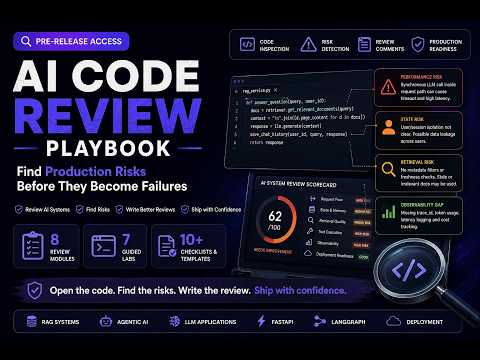

AI systems engineering and RAG architecture with a focus on production-grade implementation.

Nutrition Label

The creator provides high-quality, technically rigorous content focused on the practical engineering challenges of building production-grade AI systems.

Notes

- Focuses on production-grade AI implementation with clear, actionable technical breakdowns.

- Check the video description for links to the creator's educational resources and bootcamps.

Why this score

Rigor & Evidence · 14:15

“The wrong chunks were getting reranked first, and it was happening in a consistent manner on the informal queries.”

Demonstrates specific, evidence-based root cause analysis of a technical failure.

Trust Breakdown

- Evidence Quality: 8.0

- Firsthand Access: 8.2

- Analysis Quality: 7.2

- Depth & Nuance: 8.0

- Disclosure Clarity: 7.0

- Framing Integrity: 10.0

Explainer Lens: Reasoning and depth carry more weight.

Medium confidence. Based on 5 long-form videos.

These six Trust Core outputs drive the public creator rating. Communication affects discovery ranking separately.

Recent Videos

Pending

The Right Order to Learn Agentic AI in 2026

Jun 5, 2026 • 609 views

Pending

NVIDIA Certified Agentic AI - NCP AAI Is Useful — If You Use It Right

Jun 4, 2026 • 227 views

Pending

Your AI App Works. But Does It Look Senior?

Jun 4, 2026 • 130 views

Pending

You Built the AI Project. Why Can't You Explain It?

Jun 3, 2026 • 464 views

Pending

The Agentic AI Codebase Looked Fine. It Wasn't.

Jun 3, 2026 • 163 views

Pending

Your Agent Worked for 5 Prompts. That's Not a Test. - Agentic AI Reality

Jun 2, 2026 • 208 views

Pending

RAG, Agents, or Fine-Tuning? It's Not a Popularity Contest

Jun 2, 2026 • 312 views

Pending

Senior Engineers Don't Start With a Framework. Here's What They Do First.

Jun 1, 2026 • 321 views

Pending

The Real Cost of an Agent Call — Beyond API Pricing, What You’re Actually Paying

Jun 1, 2026 • 398 views

Pending

The Real Gap: Decision-Making Under Constraints - Agentic AI

May 30, 2026 • 216 views

Pending

I Deployed an AI Agent on AWS — Here’s What Broke First

May 29, 2026 • 373 views

Pending

Agentic AI architecture: You are doing it wrong

May 29, 2026 • 484 views

Pending

AI Architect System Design — Completion Celebration & Next Step

May 29, 2026 • 292 views

Pending

Supervisor vs Orchestrator vs Agent-as-Tool: Who Is Actually in Charge? Agentic AI Systems

May 28, 2026 • 352 views

Pending

Why RAG Must Be Understood as a Connected System, Not Separate Components - AGENTIC AI INTERVIEW

May 27, 2026 • 217 views

ReReview Trust Score: 7.9 (Worth Prioritizing)

Short URL: rereview.co/c/manifold-ai-learning

Canonical: rereview.co/creators/manifold-ai-learning

Categories

AI Assistants, Automation & Agents, Coding Tools, Developer Platforms, Productivity, Workflow Tools

Formats

Tutorials, Deep Dives
